Locking - Part 3: Modern Locking

Breaking the Model

In the previous article we looked at the traditional MultiValue locking model and also mentioned the three approaches to locking: pessimistic, optimistic, and merging. We also covered some of the traditional problems associated with enforcement, lock promotion and user feedback.

MultiValue developers have wrestled with these for a long time and their impact is generally understood: the widespread use of 4GLs and runtime clients has been a helpful factor in this regard as they generally offer a consistent approach to locking. It is when application move away from the traditional single-screen logins to the desktop and the web that the traditional locking model really struggles.

Persistence and Sessions

The locking model was designed at a time when, generally, entry screens did one thing at a time. The Basic language is effectively single threaded, and you can only run one program at a time. Users would start processing, do stuff, and finish a process before moving on to the next thing. Under this architecture, it was good enough for locks to be identified as owned by a specific user or session.

The cracks started to appear even with text based systems like SB+. This went some way to emulating a persistent forms application by allowing screen stacking, in which users could quickly open a new screen by entering shortcuts in any field, making for a very flexible experience (fig. 1).

Fig. 1 Screen Stacking in SB+

Unfortunately, it was also possible to jump back into the same screen at a lower level and to load the same record into that screen with no complaints from the lock system. As far as the database was concerned, you already own that lock, so you have the rights to that record. Suddenly you ran the risk of overwriting and losing your own updates!

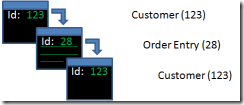

Now consider what happens in a modern, GUI-based system. Along with the many changes this introduces, possibly the biggest is the shift is to an architecture in which multiple forms can persist concurrently, all connecting through to the same session (fig. 2).

Fig. 2 Modern GUIs present multiple concurrent forms.

This type of architecture introduces three new problems.

First, the number of records visible is likely to increase: users are more likely to keep a number of screens open, increasing the number of locks held and potentially the length of time a lock is held. That in turn means a greater impact on the lock table and lock management.

Second, the locks are still owned by the session. As with the old SB+, you can open the same record independently in multiple forms without the locking system intervening at the database. This leads to potential loss or overwriting of updates unless your GUI explicitly blocks or warns against this in its own code by maintaining its own internal lock list or checking all open forms when a record is loaded.

The third problem is one of MultiValue database design. Traditional MultiValue applications traditionally link parent and child records using lists of multivalued foreign keys on the parent record. This differs from mainstream design where the parent id is held on the child and indexed to join the two together. Partly this is the result of historic accident — the lack of good indexing features on the older databases — and partly the nature of the enquiry language that uses file translations to pick up the details from related records, so using multivalued keys to look up the details of the child record from the perspective of the parent.

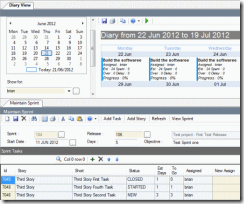

Consider an agile task management application like the one above. Here, a backlog of user stories are segregated into tasks and organized into sprints that in turn belong to releases. There are several hierarchies here, including:

Release -> Stories -> Tasks Release -> Sprints -> TasksIn an old-style design, the release record would contain a multivalued list of keys to related stories and sprints, both of which in turn would contain multivalued keys to tasks. But for a GUI this becomes more dangerous. What if the child and parent are both open in different forms as part of the same session? If you call a server side subroutine to add a new sprint, how does the copy of the release record held in the GUI know that the copy on the database has changed? The lock system shows them owned by the same session, so it will not intervene to tell you.

The solution is, of course, to separate out the index from the parent: adding a secondary index to the child record holding the parent key, as you would on a relational database (here being the release key on the sprint and the story) or breaking out the lists from the parent record and holding them in another file that is never directly loaded into the GUI, thus retaining consistency with the enquiry language that now has to do two translates instead of one:

Release -> Release/Sprint index -> Sprint ->Sprint/Task index -> Task Release -> Release/Story index -> Story -> Story/Task index -> Task

This keeps it all nice and neat, but introduces changes to the database design and involves more work in maintaining these — unless you want to move to a more restricted, primitive GUI in which the visibility of records is more limited and more in line with the older text based applications.

This, ironically, is often the case with web applications — though here the problems with the locking model are more obvious and sometimes more difficult to solve.

Stateless Applications

Web applications, web services, and other forms of SOA are designed to be stateless. Each and every call to the database exists in splendid isolation and it is not guaranteed that successive calls made from the same client will be handled by the same server process. In other words, the link between a user running an application and a database session goes out of the window.

Where the locking model is based on the concept of a user requesting an intent lock before performing any updates, and those intent locks are identified by the database session, this clearly is not going to work for a stateless application. Not only will the session requesting a lock be different than the session committing any eventual update, there is the question of lifetime.

In the traditional locking model, you can retain a lock for as long as you know a user is interested in updating a record, and release it when they are finished. In a stateless application you never know when the user has finished — they may start an update, give up, and close a browser window and you will be none the wiser. Unlike a terminal or client/server session, you never get a message to tell you the user has logged off and gone home. And writing soft locks to control access isn't a reliable option, even with timeouts.

For stateless applications, the only real options are to use optimistic or merge locking.

Optimistic Locking

Optimistic locking means allowing a user to perform updates against a local copy of a record in the hope that the underlying record has not changed in the interim. Merge locking means an expectation that the record may have changed, and taking appropriate steps to resolve the conflicts.

To perform either of these against a single record you need three things:

- An original copy of the record as it was when you read it from the database.

- The current copy of the record as held in the database for comparison.

- The current copy of your record with any changes to apply.

- From a processing point of view you need:

- A way to quickly and effectively identify whether intervening changes have taken place.

- A way to lock against other changes whilst applying any conflict resolution.

- A strategy for rolling back an update or for conflict resolution.

Locking in the Wider World

I began this series by quoting a U2UG post complaining that newer MultiValue developers don't understand locking. So it may not surprise readers that a frequent complaint amongst mature SQL Server developers (and that is almost as old as PICK!) is that younger programmers don't understand locking either. But to understand optimistic locking it is a helpful diversion.

If you have a copy of SQL Server or SQL Server Express and a copy of Visual Studio or Visual Studio Express, try the following:

First, create a database table with four columns as follows:

CREATE TABLE [dbo].[locktest]( [first] [int] NOT NULL, [second] [nvarchar](50) NULL, [third] [nvarchar](50) NULL, [fourth] [text] NULL, CONSTRAINT [PK_locktest] PRIMARY KEY CLUSTERED ( [first] ASC )

Second, create a new Windows Forms application and from the Data menu select New Data Source -> DataSet. When it asks you to select your tables for the DataSet, choose the locktest table above. Visual Studio will create a DataSet including the locktest table and generate Fill and GetData methods to populate it.

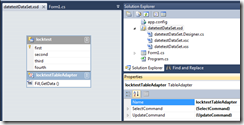

Now you're going to get Visual Studio to create an update method for you, such as you might do as a junior SQL programmer. Double click the DataSet in the Solution Explorer and navigate to the UpdateCommand for the TableAdapter at the bottom (fig. 3).

Fig. 3 Dataset with Update Command

Double click to see the query that Visual Studio has generated:

UPDATE locktest

SET first = @first, second = @second, third = @third, fourth = @fourth WHERE (first = @Original_first) AND (@IsNull_second = 1) AND (second IS NULL) AND (@IsNull_third = 1) AND (third IS NULL) OR (first = @Original_first) AND (second = @Original_second) AND (@IsNull_third = 1) AND (third IS NULL) OR (first = @Original_first) AND (@IsNull_second = 1) AND (second IS NULL) AND (third = @Original_third) OR (first = @Original_first) AND (second = @Original_second) AND (third = @Original_third)Ouch! All that just for one simple four column table — and as the table becomes more complex so the command becomes longer and more inefficient. What's more, this is only recognizing whether the underlying record has changed in the meantime, and will throw an error if it has.

Fortunately for SQL Server developers, you can add a new column to the table as follows:

DROP TABLE [dbo].[locktest] GO CREATE TABLE [dbo].[locktest]( [first] [int] NOT NULL, [second] [nvarchar](50) NULL, [third] [nvarchar](50) NULL, [fourth] [text] NULL, [sequence] [timestamp] NOT NULL, CONSTRAINT [PK_locktest] PRIMARY KEY CLUSTERED ( [first] ASC )If you now repeat the steps above you will see a much shorter statement:

UPDATE locktest SET first = @first, second = @second, third= @third, fourth = @fourth WHERE (first = @Original_first) AND (sequence = @Original_sequence)The timestamp column automatically stamps any changes made to the table allowing Visual Studio to recognize that an intervening update has taken place. Timestamp incidentally has no relation to date or time, but is just a measure of serialization. You can easily do the same with your own MultiValue database files by including an update stamp field for your own conflict checking though you must populate it yourself. Unfortunately, UniVerse users have no way to tell Visual Studio that a field is a timestamp column so it will continue to generate the same verbosity if you use any of the relational APIs.

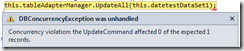

Recognizing that an intervening update has taken place is, of course, only the first step. Look what happens when you do some conflicting changes using the DataSet above (fig. 4).

Fig. 4 Concurrency Exception

Not very user friendly, but an improvement on previous releases that just threw an error to the user that their updates were not permitted. This is optimistic locking in the raw: seeing that the underlying data has changed and throwing out the update.

Conflict Resolution

Simply abandoning an update because a conflict has taken place is lazy, unacceptable, and likely to annoy your users. So is reloading the current version of the record and asking them to reapply any changes (assuming they remember them all) which is the other lazy form of optimistic programming.

Good optimistic locking, whether against SQL or MultiValue, is all about merging changes. This means re-applying all those changes made by the user (or process) to the current copy of the record that they made to the original copy, and ensuring that the record is pessimistically locked whilst those updates are applied (or you may just end up in the same loop again).

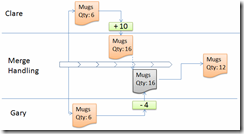

Back in the first article, we showed what happens when locking fails and two users (Clare and Gary) tried to update the same stock quantity. Because both had open copies of the same record, one of the updates was overwritten.

In a merge locking model, the update to the record would be noted as an action — in this case, adding or removing units from a stock total. The actions are then applied at the point when the records are saved to leave the record in a consistent state. Here's the original timeline rewritten to use merge processing: instead of Gary writing back the stock record only his change in volume is applied over the top of Clare's changes to leave the correct value (fig. 5).

Fig. 5: Merge Processing

It is important to remember that this does not mean simply applying the same data updates, but the same processing that led to those updates. Any derived values will need to be derived again using the current rules and data to ensure consistency.

What this really means is a separation of duties. Where traditional MultiValue programming may scatter updates through an entry screen or in flight during user interactions, with merge locking it is essential that the new data is serialized to be applied at the point of committing.

That, unfortunately, usually means code — and possibly lots of it, which is something to consider when budgeting for developing a web based application. Code spent in conflict resolution or segregated into commit processing can be verbose and costly, and is a major factor in the delivery of disconnected applications.