Wearable Tech: Going beyond Eyewear

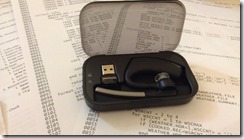

We sat down with Michael Holmlund of Plantronics to talk about the wearable tech his company provides and how to develop for it.

IS: So, Mike, how long have you been working on this sort of technology?

Michael Holmlund: I have a 15-year background in hardware/software ecosystem development, spanning a variety of technologies from digital logic to power analog to wearable technology. In a previous life I was an analyst focused on developer relations, and now I run a developer community for Plantronics: the Plantronics Developer Connection, or PDC.

IS: For most people, wearable tech is eyewear: Google Glass, Geordi's VISOR on Next Gen… What you are talking about is a little different. Can you explain?

Michael Holmlund: Of course. We just launched a new community in support of our latest wearable concept initiative, called PLTLabs. Plantronics has been a player in wearable voice technology for over 50 years, including participation in the first Apollo Moon landing. The first words spoken on the moon were actually through a Plantronics headset. That said, as our technology has evolved, we have added a variety of sensors and additional capabilities through firmware and software that now allow users to do a lot more than just transmit and receive audio. Today we support a number of products in our portfolio with free open APIs, and in addition to a very cool out-of-the-box experience, developers can now actually hack their headset and improve their applications and business processes through device-level integrations.

IS: Our readership is mostly doing IT in the business world. Can you give an example of how the PLT technology would be used, for example, in making company data work with your tech?

Michael Holmlund: We support four APIs that are being used across the enterprise in production today:

- Wearing state: Via capacitive sensors in the device, your applications can now determine whether a user is wearing a headset or not. This information can be used for very accurate presence information, for example, or to escalate an IM session to voice.

- Proximity: We expose proximity events via Bluetooth connectivity. This means if you walk away from your workstation, your apps will know that you have left, and could forward voice calls to your mobile device, or even launch a security app that would lock your workstation automatically.

If you have one of our Legend UC devices, you can download a free trial app called smartlock in our developer community that will show you how to improve network security across your enterprise in a simple, non-invasive way (intl-spectrum.com/s1060).

- Unique serial ID: Some of our devices have unique serial IDs that can be read out using this API, effectively converting your headset into an authentication token for multi-layered security protocols or shared resources, for example if you support public or shared terminals or have deployed VDI across your enterprise.

- Mobile caller ID: Plantronics studies have shown that knowledge workers receive as many as 40% of their work calls on their mobile phones, and the figure is higher for mobile professionals such as salespeople. We have an API that uses the data path in Bluetooth to pass the mobile caller ID from your mobile phone to the applications on your workstation. This API is predicated on the ability of the PLT device to maintain an active pairing state with two devices simultaneously, in this case the user's PC and the user's mobile phone. Users can toggle between calls and use this API to pass data over the active connection.

This is in use and in market today with a number of our partners. One such example is Popcorn from ThreeWill, which integrates with Salesforce and Jive to pop information about an incoming call onto your PC screen before you even answer the call. You can get a free demo and more information at (intl-spectrum.com/s1061).
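To make the wearing-state and proximity ideas concrete, here is a minimal Python sketch of the decision logic an application might run when such events arrive. It does not use the Plantronics SDK: the event names (`worn`, `not_worn`, `near`, `far`) and the action strings are hypothetical placeholders chosen for illustration, and a real integration would substitute whatever the SDK actually reports.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical event names; the real SDK's identifiers may differ.
WORN, NOT_WORN, NEAR, FAR = "worn", "not_worn", "near", "far"


@dataclass(frozen=True)
class HeadsetState:
    worn: bool = False   # is the headset on the user's head?
    near: bool = True    # is the headset within range of the workstation?


def apply_event(state: HeadsetState, event: str) -> HeadsetState:
    """Return a new state reflecting a single headset event."""
    if event == WORN:
        return HeadsetState(True, state.near)
    if event == NOT_WORN:
        return HeadsetState(False, state.near)
    if event == NEAR:
        return HeadsetState(state.worn, True)
    if event == FAR:
        return HeadsetState(state.worn, False)
    raise ValueError(f"unknown event: {event}")


def decide_actions(state: HeadsetState) -> List[str]:
    """Map the current state to the kinds of actions described above."""
    if not state.near:
        # The user walked away: lock the workstation, route calls to mobile.
        return ["lock_workstation", "forward_calls_to_mobile"]
    if state.worn:
        # Headset is on and nearby: the user can take a voice call.
        return ["set_presence_available", "allow_im_to_voice_escalation"]
    # Nearby but not wearing the headset.
    return ["set_presence_away"]
```

Keeping the state immutable and the decision function pure means the same logic can be driven by any event source, whether that is a native SDK callback or a polled web service.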

IS: Can you tell me a little about the process of using these APIs? Are they only available for one language? Can they work with non-SQL databases?

Michael Holmlund: Currently we have three major development languages a developer can use: C variants (C++ and C#) and JavaScript / REST. We also recently productized some Java wrappers to further facilitate integrations.

IS: PHP? Web service calls? Are those options?

Michael Holmlund: Our primary interface is REST, but we do have some use cases published utilizing PHP. The beauty of our device integration is that PLT allows users to benefit from simple state changes and events that are often fairly simple to integrate, which means there are generally several options open for any implementation.
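Because the integrations described here come down to simple state changes and events, the consuming side can be very small. The Python sketch below routes JSON-encoded events to handlers. The payload shape (`{"event": ..., "value": ...}`) and the event names are assumptions made for this example only and are not taken from the Plantronics REST documentation.

```python
import json
from typing import Callable, Dict

# A handler receives the event's value and returns a short description
# of what the application would do in response.
Handler = Callable[[object], str]


class EventRouter:
    """Routes JSON events of the assumed form {"event": name, "value": v}."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Handler] = {}

    def on(self, event_name: str, handler: Handler) -> None:
        """Register a handler for one event name."""
        self._handlers[event_name] = handler

    def dispatch(self, raw_json: str) -> str:
        """Decode one event and pass its value to the matching handler."""
        message = json.loads(raw_json)
        handler = self._handlers.get(message["event"])
        if handler is None:
            return "ignored"  # events nobody registered for are dropped
        return handler(message.get("value"))


# Hypothetical event names, for illustration only.
router = EventRouter()
router.on("wearing_state", lambda worn: "available" if worn else "away")
router.on("mobile_caller_id", lambda number: f"screen pop for {number}")
```

For example, `router.dispatch('{"event": "wearing_state", "value": true}')` returns `"available"`, and an event with an unregistered name returns `"ignored"`.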

IS: Published use cases... I guess that leads us to the community and support part. Tell us what people get when they join the community?

Michael Holmlund: We have a fully productized SDK which is built around the set of free open APIs. Our community can be found at http://developer.plantronics.com/welcome.

There are currently no barriers to entry to join our community, other than a simple, minimal registration process, to access the tools and technical documentation.

We are also now accepting registrations on PLTLabs from developers who are interested in getting their hands on one of our new Concept devices that incorporates a much more complex sensor module and supports greatly expanded capabilities.

IS: New devices. Let's talk about that.

Michael Holmlund: PLTLabs, our innovations team, has been doing demos with our latest wearable concept that does some very cool things. Our Concept 1 device, which is the platform that was featured at the Wearable Tech Expo in New York, incorporates a very powerful set of features and capabilities:

- Real-time device orientation data stream

- Free-fall detection

- Pedometer

- Tap detection

- Yes/No gesture recognition

- Support for simultaneous voice and data

- Wear state (on/off)

- Proximity (near/far) to host devices

- Bluetooth (HFP and A2DP)

- Onboard MFi (Made For iOS) chip for data transmission over Bluetooth with iOS devices

We will be shipping these units to developers in the next 60 days with some initial tools to support integrations for iOS, Android, and Windows.

[Examples are in the Scoble video with our CTO/VP of engineering (intl-spectrum.com/s1062) and on the blog (intl-spectrum.com/s1063).]
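Of the Concept 1 features listed above, free-fall detection is one of the easier ones to reason about. The interview does not say how the device implements it, so the Python sketch below shows one common textbook approach rather than the PLTLabs method: an object in free fall reads close to 0 g on all accelerometer axes, so a detector can look for a run of consecutive low-magnitude samples. The threshold and run length are illustrative assumptions.

```python
import math
from typing import Iterable, Tuple

Sample = Tuple[float, float, float]  # (ax, ay, az) in units of g


def detect_free_fall(
    samples: Iterable[Sample],
    threshold_g: float = 0.3,   # assumed cutoff for "near weightless"
    min_consecutive: int = 5,   # assumed number of samples in a row
) -> bool:
    """Return True if the stream contains a sustained near-0 g interval."""
    run = 0
    for ax, ay, az in samples:
        magnitude = math.sqrt(ax * ax + ay * ay + az * az)
        if magnitude < threshold_g:
            run += 1
            if run >= min_consecutive:
                return True
        else:
            run = 0  # a normal reading breaks the run
    return False
```

Requiring several consecutive samples is a simple guard against a single noisy reading triggering a false alarm; the right values depend on the sensor's sample rate and noise.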

With this platform we have already been exploring complex Bluetooth pairing implementations that use Bluetooth Low Energy beacons for indoor navigation. And, even more exciting, using the gyros and accelerometers we have done a Google Street View mashup that shows how someone wearing a headset anywhere in the world could replicate their precise view and location to anyone through Google Street View. Uses could include EMS dispatch, who could guide fire and rescue crews in real time around a site at night, even if it is poorly lit or obscured by smoke and fire. We have also started doing some really cool demos with spatial audio, enabling a user to direct audio around a room based on their head orientation and direction.
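As a rough illustration of how head orientation could drive spatial audio, the Python sketch below derives a heading (yaw) from an orientation quaternion and turns it into left/right gains with a constant-power pan. This is not the PLTLabs implementation. It assumes the orientation stream can be expressed as a unit quaternion `(w, x, y, z)` in a Z-up frame, with angles measured counterclockwise when viewed from above, so a positive relative angle means the source is to the listener's left.

```python
import math
from typing import Tuple


def yaw_degrees(w: float, x: float, y: float, z: float) -> float:
    """Heading about the vertical (Z) axis from a unit quaternion."""
    return math.degrees(
        math.atan2(2.0 * (w * z + x * y), 1.0 - 2.0 * (y * y + z * z))
    )


def stereo_gains(source_bearing_deg: float, head_yaw_deg: float) -> Tuple[float, float]:
    """Return (left, right) gains for a source, given the head's heading."""
    # Angle of the source relative to where the head points, in [-180, 180).
    relative = (source_bearing_deg - head_yaw_deg + 180.0) % 360.0 - 180.0
    # Clamp to +/-90 degrees and normalize: +1 is fully left, -1 fully right.
    # Sources behind the listener are simply clamped in this sketch.
    pan = max(-90.0, min(90.0, relative)) / 90.0
    # Constant-power pan law: left^2 + right^2 == 1 for every pan value.
    theta = (pan + 1.0) * math.pi / 4.0
    return math.sin(theta), math.cos(theta)
```

With the head facing the source, both gains are equal. As the head turns away, the sound shifts toward the ear nearer the source while the total power stays constant. The same yaw value is the kind of heading a Street View style mashup would need in order to mirror the wearer's view.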

IS: It sounds like you have a real passion for this.

Michael Holmlund: I am extremely excited about what we are doing here at Plantronics, and PLTLabs. It's important to note that many of these initial experiments / building blocks are all aligned with some of the most fundamental problems that impact all knowledge workers in the Enterprise today, namely call transitions — getting from your home phone to your mobile in your car for the drive in, and then transitioning to a squawk box in a conference room, seamlessly and without sacrificing audio quality. The fact that our headset can pair with multiple devices, know where you are, and can enable spatial audio, will all help solve some of these basic issues that drive us all crazy.

IS: If you had to make an elevator pitch for why people should join your community and invest time in developing with Plantronics, what would you say?

Michael Holmlund: Plantronics has already shipped tens of millions of units of wearable tech. Our products are ubiquitous across the enterprise, and now we are solving some of the most important problems across the enterprise. Be a part of it. It's easy and free.