Auditing Database Changes with UniVerse Indexing Subroutines

The UniVerse database has supported file triggers for some time, not just on UniVerse SQL tables. They are fully compliant with the standard SQL model for triggers — before and after insert, update, and delete events; referential integrity; cascading; the ability to modify or terminate an update; and so forth. Obviously, supporting all this functionality involves a noticeable amount of overhead. But in the Real World, our traditional MultiValue applications manage all of this themselves. The primary use of file triggers I have seen is as a way to capture database change events in order to log them as part of IT governance auditing (SOX, HIPPA, etc.).

In UniVerse release 11.1, Rocket Software has provided us a way of using indexing subroutines to capture changes to a file. To completely understand how this new technique works, let's first review the normal use of indexing subroutines.

The purpose of an indexing subroutine is to derive the index value that is to be cross referenced with the record key. For example, say that we had a need to be able to quickly retrieve all of the purchase orders entered by a particular employee for a given accounting period. Accounting periods do not always follow calendar months. So for our particular industry, in order to determine the accounting period of a particular date, we might have to look up the information in a period table. We could have a subroutine whose job it was to take the purchase order date out of the record, look it up in the period table, and catenate the period with the user login ID. We then create an I-type descriptor in the dictionary that calls this subroutine then build an index on that I-type. Anytime we add, change, or delete a record, UniVerse is going to want to update that index appropriately.

In the case of adding the record (an "insert" in SQL terminology), the indexing routine calls our subroutine once in order to generate the <accounting period>*<login ID> value that is to have that purchase order number cross referenced to it. Likewise, when deleting a record, our subroutine would be called once to return the index value from which the purchase order number is to be removed.

Changing a record ("update") requires that our subroutine be called twice — once with the old data to determine which index value is to have the purchase order number removed and once with the new data to determine which index value is to get the purchase order number added to it.

One of the problems with cross-referencing, be it with indexes or the traditional XREF file, is what happens when the majority of records cross reference to the same index record. For example, if you were indexing on an order status field so that you could quickly retrieve all open orders, you would have an index record for the "O" status and another index record for the "C" status code (and, of course, various status codes in between). Assuming that the majority of your orders have been completed, you can see that the index record for the "C" status is going to be humongous. Updating this index record anytime an order goes to "C" status is going to take a lot of time. And it will continue to get worse and worse as it goes along. Eventually you get to the point where a user marks an order as completed and has time to go get a cup of coffee before they can do anything else with their screen.

What you would want to do in this case is to create your index on an I-type that returns the status code if it was anything other than a "C" and the null string otherwise. Then when you create the index, use the NO.NULLS option. This way, your completed orders (perhaps hundreds of thousands of records) would not be indexed in a single index record.

What does all of this have to do with auditing database changes? Directly, nothing. But once we understand the indexing mechanism and the purpose of an indexing subroutine, we can make use of it for purposes other than for what it was originally intended.

The general idea has been around since the days of Prime INFORMATION. The indexing mechanism is only interested in the value that your subroutine returns. It doesn't care about what your subroutine might do behind the curtain. You create an I-type in the dictionary of the file to be audited. This I descriptor does nothing more than call your subroutine by using theSUBR function.

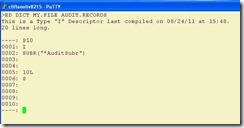

In the example shown in figure 1, this I-type descriptor will call the AuditSuber subroutine. (The * as the first character indicates that this is a globally catalogued subroutine. If you're not familiar with that concept, see the UniVerse documentation.) This subroutine will return the string value that is to be indexed, which will always be the null string. Now here is where the "trick" comes in. Create the index with the NO.NULLS option and build the (empty) index (fig. 2).

Fig. 1

Fig. 2

Since your subroutine will never return a value other than the null string, you have essentially used an index as a hook simply to call your subroutine, which will do anything that it wants to do including writing records to other files, and return to the indexing mechanism which will proceed to do… absolutely nothing.

So what does the subroutine do once it is called? In this case, we wanted to create an audit trail of what has been changed in the data record. The current data record is always passed into the subroutine in the @record system variable. So at first glance, it would appear that the job is relatively straightforward. There is, however, one glitch in the technique that has perplexed programmers who use it for years. How do you know why you were in your subroutine? If you have been called because a new record is being created,

@record will contain the new data that is about to be written to the file. If you are called because a record is being deleted, @record will contain the data that is about to be deleted. And if a record is being updated, your subroutine will get called twice, once with the old data and once with the new data. So how do you know what @record represents? Old, new, about to be added, or about to be deleted?

A number of techniques have been tried, usually with stashing things in named common, rereading the record from the disk (so much for efficiency), and trying to guess at what the time interval might be between the first and second call of an update. Frankly, most of these were kludges that were forced to sacrifice either accuracy or efficiency. So there was great rejoicing when true file triggers were introduced.

And then people started running benchmarks. And the rejoicing became somewhat subdued. All of that SQL compliant stuff adds a noticeable amount of performance overhead. So as an alternative, UniVerse 11.1 gives us another system variable to tell us what stage of the indexing mechanism is calling our subroutine. The variable is @idx.iotype. (Yes. These system variables can be uppercase: @RECORD and @IDX.IOTYPE, if all caps coding is your preference.)

When the indexing mechanism calls your subroutine @idx.iotype is set to an integer value from zero through four. (See figure 3 for the meaning of the five values.) Now when all we want to do is monitor and record database change events, rather than using triggers, we can go back to the lower overhead indexing subroutine technique and know exactly why our subroutine has been called and what we are supposed to do in that instance.

0 — Not being used from an index 1 — INSERT (new) record 2 — DELETE record 3 — UPDATE derive old index value 4 — UPDATE derive new index value

Fig. 3 Values of @IDX.IOTYPE variable

Figure 4 is a listing of a very simplistic auditing subroutine. In real life, you will probably want to be doing field level comparisons and more sophisticated recording of who changed what when where.

*Subroutine to demonstrate the use of using index subroutines to

*provide auditing of database updates.

*

*@idx.iotype = 0 - Not being used from an index;

* 1 - INSERT (new) record;

* 2 - DELETE record;

* 3 - Derive old index value;

* 4 - Derive new index value;

subroutine AuditSubr(returnValue)

common /auditing/ dictAuditTrail, fileAuditTrail, flagOpen, oldDataRec

equ nil to ""

equ otherwise to @true

systemMarks = @fm : @vm : @svm

printableMarks = "^]\"

if not(flagOpen) then

open "DICT","AUDIT.TRAIL" to dictAuditTrail else

return ; * to caller.

end

open "AUDIT.TRAIL" to fileAuditTrail else

return ; * to caller.

end

flagOpen = @true

end

begin case

case @IDX.IOTYPE = 0

null ; * Not being called from an index.

case @idx.iotype = 1

gosub LogNewRecord

case @idx.iotype = 2

gosub LogDeleteRecord

case @idx.iotype = 3

oldDataRec = @record ; * Save before image for next call.

case @idx.iotype = 4

gosub LogUpdateRecord

end case

returnValue = nil ; * Don't do any indexing.

return ; * to caller.

*--------------------------------------------------------------------

LogNewRecord:

logEntry = "N"

logEntry<2> = @id

logEntry<4> = convert(systemMarks, printableMarks, @record)

gosub WriteLogEntry

return

*--------------------------------------------------------------------

LogDeleteRecord:

logEntry = "D"

logEntry<2> = @id

logEntry<3> = convert(systemMarks, printableMarks, @record)

gosub WriteLogEntry

return

*--------------------------------------------------------------------

LogUpdateRecord:

logEntry = "U"

logEntry<2> = @id

logEntry<3> = convert(systemMarks, printableMarks, oldDataRec)

logEntry<4> = convert(systemMarks, printableMarks, @record)

gosub WriteLogEntry

return

*--------------------------------------------------------------------

WriteLogEntry:

readu dictrecAuditTrail from dictAuditTrail, "&NEXT.AVAILABLE&" else

dictrecAuditTrail = "X"

dictrecAuditTrail<2> = 1

end

idAuditTrail = dictrecAuditTrail<2>

dictrecAuditTrail<2> += 1

write dictrecAuditTrail on dictAuditTrail, "&NEXT.AVAILABLE&"

recAuditTrail = logEntry

recAuditTrail<5> = date()

recAuditTrail<6> = time()

recAuditTrail<7> = @logname

write recAuditTrail on fileAuditTrail, idAuditTrail

return

*--------------------------------------------------------------------

end

Fig. 4

The named common at line 11 serves two purposes. The first is the fairly typical way of avoiding reopening our audit file every time the subroutine is called. The second is to provide a place to store the "before" record data during the first UPDATE call so that we can compare it with the "after" data provided by @record in the second UPDATE call.

The case structure from lines 28 through 39 is a simple dispatch based on the value of @idx.iotype to the appropriate routine for the type of database change that is occurring. Note that in the case of @idx.iotype type being equal to 3, all we are doing is saving a copy of the old record in named common so that it can be used when we come back to the subroutine with @idx.iotype type equal to 4.

The rest of the code is just to save readable before and after images to our audit file for demonstration and testing purposes. In a Real World subroutine, this is where the actual work of doing record comparisons and building an appropriate audit entry would take place. The results of adding, changing, and then deleting a record with the editor is shown in figure 5.

Fig. 5

So does this eliminate the use of regular file triggers? Not really. In cases where you need to be able to change the data being saved to the file, be able to allow or disallow the update to continue depending on some set of security criteria, use the other SQL features, triggers are still the way to go. But where your intent is to simply observe what changes are being made to the database and record an audit trail of those facts, indexing subroutines provide a lightweight alternative.